For beginners in AI and deep learning, choosing the right Nvidia GPU is crucial. The best choice balances performance and cost.

Getting started with AI and deep learning can be overwhelming. Choosing the right GPU can make a big difference. Nvidia offers several options, each with unique features. As a beginner, you need a GPU that is powerful yet affordable. This introduction will help you understand which Nvidia GPU is best suited for your needs.

We’ll explore key aspects like performance, ease of use, and budget. By the end, you’ll have a clear idea of which GPU to start your AI and deep learning journey with.

Why You Need an NVIDIA GPU for AI & Deep Learning

Nvidia GPUs are the gold standard for AI for several reasons:

- Parallel processing: Ideal for training deep learning models that require massive matrix computations

- CUDA support: Nvidia’s proprietary GPU computing platform is widely used in AI libraries (TensorFlow, PyTorch, etc.)

- Tensor Cores: Designed for faster matrix operations, which are essential for training neural networks

- Great community and software support: From cuDNN to TensorRT, Nvidia provides an end-to-end ecosystem
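Once a card is installed, a quick way to confirm that the CUDA stack is working is to ask your framework what it can see. The sketch below assumes PyTorch as the framework (any CUDA-enabled library has an equivalent check) and reports the first GPU's name, memory, and compute capability. The function name `describe_gpu` is made up for this example.

```python
def describe_gpu() -> str:
    """Return a one-line summary of the first CUDA device PyTorch can see."""
    try:
        import torch  # assumption: PyTorch is the framework you installed
    except ImportError:
        return "PyTorch is not installed"

    if not torch.cuda.is_available():
        return "PyTorch is installed, but no CUDA-capable GPU is visible"

    props = torch.cuda.get_device_properties(0)
    vram_gib = props.total_memory / 1024**3
    return (
        f"{props.name}: {vram_gib:.1f} GiB VRAM, "
        f"compute capability {props.major}.{props.minor}"
    )


print(describe_gpu())
```

If this reports that no GPU is visible on a machine that has one, the usual suspects are a missing driver or a CPU-only build of the framework.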

Importance For AI And Deep Learning

AI and deep learning need vast amounts of data to train models. Nvidia GPUs can process this data quickly, which speeds up training. Faster training means faster results and more room to experiment, which in turn tends to produce better models.

GPUs are also more efficient than CPUs. They can perform many calculations at once. This parallel processing is critical for AI tasks. It allows for more complex models and more accurate predictions.

Overview Of GPU Options

Nvidia offers several GPU options for beginners. The Nvidia GeForce RTX series is popular. These GPUs are affordable and powerful. They are suitable for many AI tasks. The RTX 3060 is a good starting point. It balances cost and performance well.

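Another option is Nvidia’s data center line, formerly sold under the Tesla name. These GPUs are designed for high-performance computing. They are more expensive but offer superior performance. Older cards such as the Tesla K80 turn up cheaply second-hand, but the K80 dates from 2014, has no Tensor Cores, and is no longer supported by current CUDA releases, so it is a poor choice for a beginner today.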

Lastly, the Nvidia Quadro series is worth considering. These GPUs are built for professional use. They offer excellent performance and reliability. The Quadro RTX 4000 is a solid option for beginners. It provides a good mix of power and cost.

Key Factors to Consider as a Beginner

Before selecting a GPU, beginners should evaluate:

- Budget: Entry-level GPUs cost under $500, while high-end options can exceed $2,000

- VRAM (Video RAM): Determines how large your models and datasets can be. At least 8 GB of VRAM is recommended.

- CUDA cores: More cores = more parallel computations

- Tensor cores: For faster AI model training

- Power consumption & compatibility: Ensure your PC build can support the GPU
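To make the VRAM point concrete, here is a rough back-of-the-envelope estimate of the memory needed just to hold a model during training. It is a sketch under stated assumptions: FP32 weights (4 bytes each), one gradient per weight, and an Adam-style optimizer that keeps two extra values per weight. Activations, which depend on batch size, come on top of this. The function name and the parameter counts (approximate public figures) are illustrative.

```python
def training_memory_gb(num_params: int,
                       bytes_per_param: int = 4,
                       optimizer_states: int = 2) -> float:
    """Rough lower bound: weights + gradients + optimizer states.

    Ignores activations, which grow with batch size and input size.
    """
    copies = 1 + 1 + optimizer_states  # weights, gradients, optimizer states
    return num_params * bytes_per_param * copies / 1e9


# Approximate parameter counts, used here only for illustration.
models = [
    ("ResNet-50 (~25.6M params)", 25_600_000),
    ("BERT-base (~110M params)", 110_000_000),
    ("1B-parameter model", 1_000_000_000),
]

for name, params in models:
    print(f"{name}: ~{training_memory_gb(params):.1f} GB before activations")
```

Under these assumptions a 1-billion-parameter model needs about 16 GB before any activations are stored, which is why the 8 GB floor suits small models and fine-tuning rather than training large networks from scratch.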

Budget Considerations

Choosing the right Nvidia GPU for AI and deep learning beginners involves considering the budget. Investing in the best GPU within your budget can significantly impact your learning curve and project outcomes. Let’s break down the budget considerations for beginners.

Entry-level GPUs

Entry-level GPUs from Nvidia offer a good balance between cost and performance. The Nvidia GTX 1660 Super is a popular choice. It provides a decent amount of CUDA cores and VRAM. This makes it suitable for small to medium-sized projects. Another option is the Nvidia RTX 2060. This GPU offers enhanced performance with its Tensor Cores. It supports faster computations, which is beneficial for deep learning tasks.

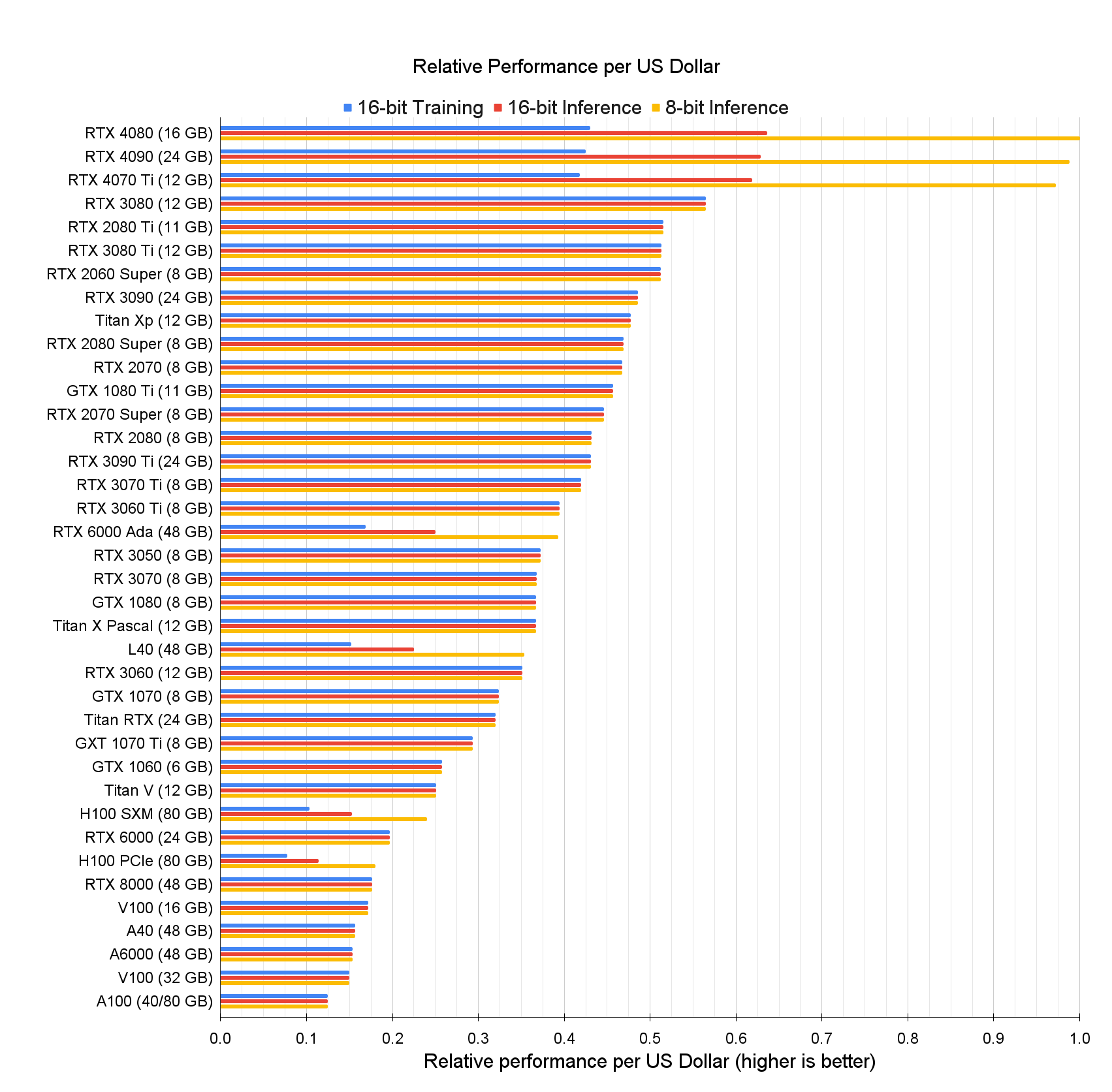

Cost Vs. Performance

Understanding the cost versus performance of GPUs is crucial. The Nvidia GTX 1660 Super is more affordable. It costs around $230. While it may not be the most powerful, it handles basic AI tasks well. The Nvidia RTX 2060, on the other hand, is slightly more expensive. It costs approximately $300. This extra cost brings better performance. The Tensor Cores in the RTX 2060 offer faster deep learning computations.

For those with a tighter budget, the GTX 1650 is an option. It costs around $150. It is suitable for very basic AI and deep learning projects. While not as powerful, it still provides a starting point for beginners. Weighing the cost against the performance helps in making an informed decision. Beginners should invest in a GPU that fits their budget while meeting their learning needs.

Performance Metrics

Understanding performance metrics is crucial in choosing the best Nvidia GPU for AI and deep learning. These metrics help evaluate how well a GPU handles tasks. They also give insight into the GPU’s efficiency. For beginners, focusing on key metrics ensures a balanced choice. Let’s explore some of these important metrics.

CUDA Cores And Tensor Cores

CUDA cores are essential for parallel processing. They allow your GPU to handle many calculations at once. Within the same GPU generation, more CUDA cores generally mean better performance in AI tasks. Tensor Cores specialize in deep learning operations. They accelerate matrix calculations, especially at reduced precision such as FP16. This makes training AI models faster and more efficient.

Memory Bandwidth And Size

Memory bandwidth impacts how quickly data moves in and out of the GPU. Higher bandwidth means faster data processing. This is vital for large datasets. Memory size is equally important. It determines how much data the GPU can handle at once. Larger memory allows for more complex models and larger datasets. This is especially helpful for beginners working with deep learning.
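Peak memory bandwidth is not a mysterious number: it follows from the width of the memory bus and the per-pin data rate. The sketch below shows the arithmetic. The bus widths and data rates are assumptions taken from commonly published spec sheets, not from this article, so check the vendor page for the exact card you are considering.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) x per-pin rate (Gbit/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# (bus width in bits, per-pin data rate in Gbit/s); assumed spec-sheet values.
cards = {
    "RTX 3060 12GB": (192, 15.0),
    "RTX 4070 Ti Super": (256, 21.0),
    "RTX 4090": (384, 21.0),
}

for name, (bus_width, rate) in cards.items():
    print(f"{name}: {memory_bandwidth_gb_s(bus_width, rate):.0f} GB/s")
```

These are theoretical peaks. Real workloads reach only a fraction of them, but the ratio between cards is still a useful guide.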

Best Nvidia GPUs for AI and Deep Learning Beginners in 2025

1. Nvidia RTX 4060 Ti – Best Budget Option

- VRAM: 8 GB / 16 GB variants

- CUDA Cores: ~4352

- Tensor Cores: 4th Gen

- Price: ~$399–$499

Why it’s great

The RTX 4060 Ti strikes an excellent balance between price and performance. It’s perfect for beginners working on small to mid-sized deep learning projects like image classification, NLP fine-tuning, or LLM inference. It supports DLSS 3, FP16, and TensorRT for model acceleration.
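For LLM inference, the first question is whether the model's weights fit in VRAM at all. The sketch below estimates weight memory at a few common precisions and checks it against the 8 GB and 16 GB variants. The 7-billion-parameter model size and the 80% headroom figure (leaving room for activations and cache) are assumptions chosen for illustration.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weights_gb(num_params: int, precision: str) -> float:
    """Memory needed for the weights alone, in GB."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: int, precision: str, vram_gb: float,
         headroom: float = 0.8) -> bool:
    """True if the weights use at most `headroom` of VRAM (0.8 is an assumed margin)."""
    return weights_gb(num_params, precision) <= vram_gb * headroom


params = 7_000_000_000  # hypothetical 7B-parameter model
for precision in ("fp16", "int8", "int4"):
    size = weights_gb(params, precision)
    print(f"{precision}: {size:.1f} GB | "
          f"8 GB card: {fits(params, precision, 8)} | "
          f"16 GB card: {fits(params, precision, 16)}")
```

By this estimate a 7B model at FP16 (14 GB) is too tight even for the 16 GB variant, while an 8-bit version fits on 16 GB and a 4-bit version fits on 8 GB.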

2. Nvidia RTX 4070 Super – Best Value for Performance

- VRAM: 12 GB

- CUDA Cores: 7168

- Tensor Cores: 4th Gen

- Price: ~$599–$699

Why it’s great

The RTX 4070 Super is ideal for serious learners or hobbyists planning to train larger models or work on more advanced projects like GANs or transformer-based architectures. With 12 GB of VRAM, it supports more complex models and larger batch sizes.

3. Nvidia RTX 3090 – Best for Prosumer AI Learning

- VRAM: 24 GB

- CUDA Cores: 10,496

- Tensor Cores: 3rd Gen

- Price: ~$1,000 (used)

Why it’s great

Although it’s from a previous generation, the RTX 3090 still outperforms many newer GPUs in deep learning thanks to its massive VRAM. It’s excellent for training models on full datasets without hitting memory limits—perfect for those wanting to go beyond tutorials and dive into full-scale model training.

4. Nvidia RTX 4080 Super – Best Future-Proof Option

- VRAM: 16 GB

- CUDA Cores: 10,240

- Tensor Cores: 4th Gen

- Price: ~$999

Why it’s great

For beginners who want to invest in future-proof hardware, the RTX 4080 Super offers high-end performance and efficiency. It’s suitable for large-scale model training, 3D generative AI projects, and mixed precision computing using FP8/FP16.
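Mixed precision is faster because FP16 values are half the size of FP32, but that comes with limits on range and precision. The sketch below uses only Python's standard `struct` module, whose `"e"` format is IEEE half precision, to show two of those limits. The `to_fp16` helper and the scale factor of 1024 are illustrative choices, not part of any framework.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


# Limited precision: above 2048, FP16 can only represent even integers.
print(to_fp16(2049.0))  # 2048.0

# Limited range: a very small gradient underflows to zero.
tiny_gradient = 1e-8
print(to_fp16(tiny_gradient))  # 0.0

# Loss scaling: multiply before converting to FP16, divide afterwards in
# higher precision, and the value survives as a small nonzero number.
scale = 1024.0
print(to_fp16(tiny_gradient * scale) / scale)
```

This is why mixed-precision training tools scale the loss and keep a higher-precision copy of the weights rather than simply casting everything to FP16.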

5. Nvidia RTX A4000 (Workstation GPU)

- VRAM: 16 GB GDDR6 ECC

- CUDA Cores: 6144

- Tensor Cores: 3rd Gen

- Price: ~$1,100

Why it’s great

A professional-grade GPU at a manageable price, the A4000 is built for creators, researchers, and learners needing ECC memory, better thermal design, and reliability for long training sessions. Great for those working in academic or small-scale enterprise environments.
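Since VRAM is usually the first limit a beginner hits, one simple way to compare the five cards above is price per gigabyte of VRAM. The sketch below uses the prices listed above. It assumes the $499 end of the RTX 4060 Ti range corresponds to the 16 GB variant and takes the low end of the other price ranges. It ignores compute speed entirely, so treat it as one input rather than a ranking.

```python
# (name, VRAM in GB, approximate price in USD), taken from the list above.
# Assumption: $499 is the 16 GB RTX 4060 Ti; low end of other ranges is used.
CARDS = [
    ("RTX 4060 Ti (16 GB)", 16, 499),
    ("RTX 4070 Super", 12, 599),
    ("RTX 3090 (used)", 24, 1000),
    ("RTX 4080 Super", 16, 999),
    ("RTX A4000", 16, 1100),
]


def price_per_gb(price_usd: float, vram_gb: int) -> float:
    """Dollars paid per GB of VRAM."""
    return price_usd / vram_gb


# Cheapest VRAM first.
ranked = sorted(CARDS, key=lambda card: price_per_gb(card[2], card[1]))

for name, vram, price in ranked:
    print(f"{name:<22} {vram:>2} GB  ${price:>5}  "
          f"${price_per_gb(price, vram):6.2f}/GB")
```

On these numbers the 16 GB RTX 4060 Ti and a used RTX 3090 offer the cheapest VRAM, while the RTX A4000 charges a premium for its workstation features.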

NVIDIA GPUs for Beginners

Several Nvidia GPUs were evaluated based on the above criteria, with a focus on their suitability for beginners. The following table summarizes the key options, including their specifications and suitability:

| GPU Model | VRAM | Memory Bandwidth | Tensor Cores | Price (Approx.) | Suitability for Beginners |
|---|---|---|---|---|---|
| NVIDIA GeForce RTX 4070 Ti Super | 16GB GDDR6X | 672GB/s | 264 (4th gen) | ~$799 (launch MSRP) | Excellent balance of performance and cost, ideal for mid-sized models, future-proof with 16GB VRAM |
| NVIDIA GeForce RTX 3060 | 12GB GDDR6 | ~360GB/s | 112 (3rd gen) | $300-$400 | Budget-friendly, suitable for basic tasks, but older architecture may limit performance for growth |
| NVIDIA GeForce RTX 3080 | 10GB GDDR6X | 760GB/s | 272 (3rd gen) | $996 | Good for large datasets, but 10GB VRAM may be limiting, and higher cost reduces affordability |
| NVIDIA GeForce RTX 4090 | 24GB GDDR6X | 1,008GB/s | 512 (4th gen) | $1,500-$2,000 | Overkill for beginners, high cost and power consumption, better for advanced users |
| Nvidia A40 | 48GB GDDR6 | Not specified | 336 (3rd gen) | Several thousand | Professional-grade, overkill and expensive for beginners, designed for data centers |

The evaluation included insights from multiple sources, such as Tim Dettmers, "Which GPU for Deep Learning?", which emphasized cost-effectiveness and memory requirements, and Cloudzy, "Best GPU for Machine Learning", which highlighted the RTX 4070 as suitable for enthusiasts with medium-level needs. Additionally, Ankr, "Top GPUs for AI", suggested the RTX 3080 for budget-conscious beginners, while Easy With AI, "The Best GPUs for AI and Deep Learning", recommended the RTX 3060 for entry-level tasks.

Popular NVIDIA GPUs For Beginners

Choosing the right Nvidia GPU can be confusing for beginners in AI and deep learning. There are many options with different features and price points. This section will help you understand popular Nvidia GPUs for beginners.

GeForce GTX Series

The GeForce GTX series is a good starting point. These GPUs offer solid performance for entry-level AI tasks. The GTX 1660 and GTX 1650 are popular choices. They are affordable and can handle basic neural networks.

These GPUs do not have advanced features like Tensor Cores. But they are still effective for learning and small projects. You can run frameworks like TensorFlow and PyTorch on them without issues.

GeForce RTX Series

The GeForce RTX series is a step up from the GTX series. These GPUs have Tensor Cores, which boost AI calculations. The RTX 2060 and RTX 2070 are great for beginners. They offer better performance for training larger models.

RTX GPUs also support real-time ray tracing. This feature is not essential for AI, but it adds value for users interested in graphics. The RTX series is more expensive but worth the investment for serious learners.

Benefits Of GeForce GTX Series

Choosing the right GPU is crucial for beginners in AI and deep learning. Nvidia’s GeForce GTX series offers a range of benefits that make it an excellent choice for newcomers. These GPUs strike a balance between price and performance, making them accessible and effective for those just starting out.

Affordability

The GeForce GTX series is known for its affordability. This makes it a great option if you’re on a budget. You don’t have to break the bank to get started with AI and deep learning.

For example, the GTX 1660 offers a good blend of cost and performance. It’s affordable compared to higher-end models, yet powerful enough to handle most beginner tasks.

Why spend a fortune when you can get a capable GPU at a fraction of the price? Think about the long-term savings and how you can reinvest in other essential tools.

Adequate Performance

While the GTX series may not be the top-tier in Nvidia’s lineup, it provides adequate performance for beginners. You can run popular frameworks like TensorFlow and PyTorch without significant issues.

Take the GTX 1060, for instance. It has enough power to perform tasks like training small neural networks and running inference models. It’s a solid starting point for anyone looking to dive into AI and deep learning.

Have you ever tried running a model on an outdated GPU? The struggle is real. With the GTX series, you can avoid these frustrations and focus on learning and development.

So, why not give the GeForce GTX series a try? It’s a cost-effective way to get your feet wet in the world of AI and deep learning. What’s stopping you?

Advantages Of GeForce RTX Series

The GeForce RTX Series from Nvidia offers many advantages for AI and deep learning beginners. These GPUs are known for their advanced features and exceptional performance. They provide a solid foundation for anyone starting their journey in AI and deep learning.

Advanced Features

The GeForce RTX Series includes features that matter for AI tasks. The most important is Tensor Cores, which accelerate the matrix computations behind neural networks and make training faster and more efficient. The series also introduced real-time ray tracing, a graphics feature that improves rendering quality but does not speed up model training.

Additionally, the RTX Series supports Deep Learning Super Sampling (DLSS). DLSS uses AI to boost frame rates in games while maintaining image quality. It is a good example of a neural network running on Tensor Cores, but it is not something you use when training your own models. The GPUs also offer CUDA cores, which handle general parallel processing. This is essential for handling large datasets and complex calculations.

Better Performance

The GeForce RTX Series stands out for its impressive performance in AI and deep learning. These GPUs offer high memory bandwidth, which is vital for processing large datasets quickly. More memory means you can train bigger models without running into memory issues.

Another practical point is cooling. Long training sessions keep the GPU under sustained load, so a card with an efficient cooler can maintain high performance without overheating. Newer RTX generations also tend to deliver more performance per watt, which helps keep running costs down and makes them a cost-effective choice for beginners.

Overall, the GeForce RTX Series combines advanced features with top-notch performance. This makes them an ideal choice for AI and deep learning beginners.

Use Cases And Scenarios

Choosing the right Nvidia GPU for AI and deep learning beginners is crucial. Different GPUs serve different purposes based on your project needs. Understanding use cases can help you make an informed decision.

Small Projects

Small projects often involve limited datasets and basic models. Beginners working on these projects need a GPU that balances cost and performance. The Nvidia GTX 1660 Super is a good choice. It offers enough power for tasks like image classification and simple neural networks. This GPU is budget-friendly and easy to set up. It is also energy-efficient, which means lower running costs.

Learning And Experimentation

Learning and experimentation require flexibility. Beginners should look for a GPU that supports various frameworks. The Nvidia RTX 3060 is ideal for this purpose. It has good compatibility with popular AI libraries. Its Tensor Cores help speed up training times. This GPU can handle more complex models as you advance. It provides a good balance between price and performance.

With the RTX 3060, you can experiment with deep learning techniques. This includes transfer learning and fine-tuning pre-trained models. It is a solid choice for those keen on learning and growing in the AI field.

Future-proofing Your Investment

Choosing the right Nvidia GPU is crucial for beginners in AI and deep learning. The Nvidia RTX 3060 is a good choice due to its balance of performance and affordability. This GPU offers ample power for training models without breaking the bank.

When choosing the best Nvidia GPU for AI and deep learning as a beginner, you might be concerned about the longevity of your investment. Future-proofing your choice ensures that your GPU remains relevant and efficient for years to come. Let’s dive into the key aspects to consider for future-proofing your investment.

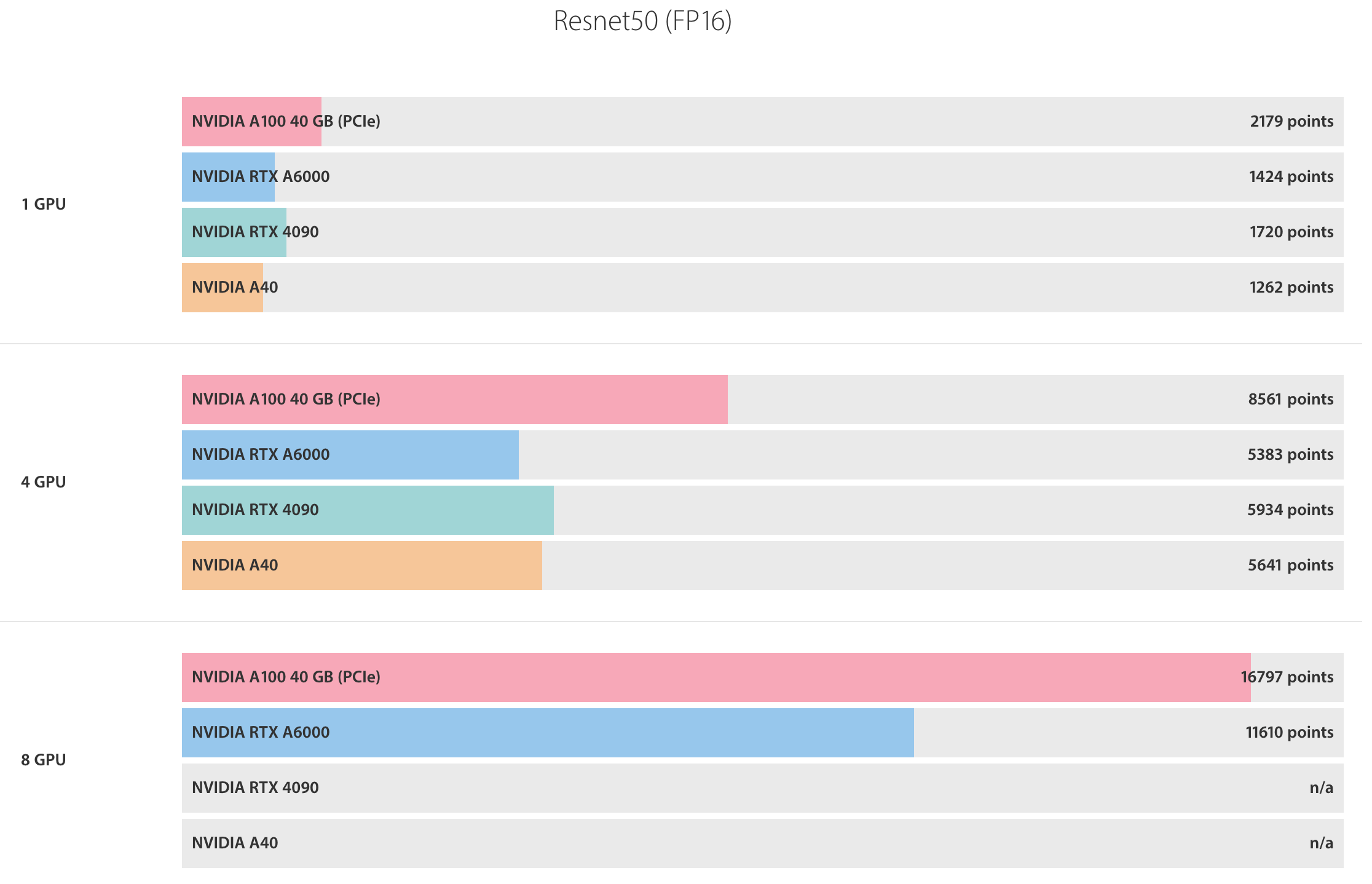

Scalability

A scalable GPU can grow with your needs. As you progress in AI and deep learning, your projects will become more complex. A scalable GPU helps you handle larger datasets and more advanced models without needing to buy new hardware.

Nvidia’s RTX 30 series offers excellent scalability. It supports a range of applications from basic models to more complex neural networks. Starting with an RTX 3060 might be ideal. It provides a balance between affordability and performance.

Upgrade Paths

Understanding upgrade paths is crucial. You want a GPU that fits into an ecosystem where upgrading is straightforward. Nvidia GPUs are renowned for their compatibility and ease of upgrading.

Consider the RTX 3060 Ti for better performance. It’s a step up from the 3060 and will handle more demanding tasks. If you find your needs growing, transitioning to an RTX 3070 or 3080 can be seamless.

How will you know when to upgrade? Monitor your GPU’s performance and the demands of your projects. If you notice lag or longer processing times, it might be time to consider an upgrade.
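One way to do that monitoring is to poll `nvidia-smi`, the command-line tool that ships with the Nvidia driver, while a training job runs. The sketch below queries utilization and memory use and flags a GPU whose memory is nearly full. The helper names and the 90% threshold are assumptions for illustration, and the script simply returns nothing on machines without `nvidia-smi`.

```python
import shutil
import subprocess

FIELDS = "name,utilization.gpu,memory.used,memory.total"


def parse_smi_line(line: str) -> dict:
    """Parse one CSV row produced by the nvidia-smi query in read_gpus()."""
    name, util, used, total = [part.strip() for part in line.rsplit(",", 3)]
    return {
        "name": name,
        "util_pct": int(util),
        "mem_used_mib": int(used),
        "mem_total_mib": int(total),
    }


def is_memory_bound(gpu: dict, threshold: float = 0.9) -> bool:
    """True if VRAM use exceeds the (assumed) 90% threshold."""
    return gpu["mem_used_mib"] / gpu["mem_total_mib"] > threshold


def read_gpus() -> list:
    """Return one dict per GPU, or an empty list if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", f"--query-gpu={FIELDS}",
             "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        )
    except (subprocess.CalledProcessError, OSError):
        return []
    gpus = []
    for line in result.stdout.splitlines():
        try:
            gpus.append(parse_smi_line(line))
        except ValueError:
            continue  # skip blank rows or fields reported as "[N/A]"
    return gpus


for gpu in read_gpus():
    status = "memory nearly full" if is_memory_bound(gpu) else "memory OK"
    print(f"{gpu['name']}: {gpu['util_pct']}% busy, "
          f"{gpu['mem_used_mib']}/{gpu['mem_total_mib']} MiB ({status})")
```

If memory is regularly near the limit and you are already shrinking batch sizes to avoid out-of-memory errors, more VRAM is likely the upgrade that will help most.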

Future-proofing also involves software compatibility. Nvidia frequently updates its drivers and software to support new technologies. This ensures your GPU stays relevant and efficient.

Investing in an Nvidia GPU is not just about current needs. It’s about anticipating future demands and being ready to meet them. A thoughtful choice now can save you from frequent upgrades and ensure a smooth journey in AI and deep learning.

Tips for AI Beginners Choosing a GPU

- Don’t overinvest early – Start with a mid-tier card like the RTX 4060 Ti or 4070 to learn the ropes.

- Use Google Colab or Kaggle – These platforms offer free GPU access, perfect for practicing before buying.

- Consider used cards – GPUs like the RTX 3090 often go for 50% off second-hand and still pack a punch.

- Check software compatibility – Ensure the GPU supports CUDA 11+ and cuDNN for your chosen frameworks.

- Future-proofing matters – If you’re planning a career in AI, buying a 12–16 GB VRAM GPU now saves you later.

Frequently Asked Questions

What Is The Best Nvidia GPU For Deep Learning?

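For large-scale deep learning, data center GPUs such as the Nvidia A100 offer exceptional performance and efficiency, but they cost far more than a beginner needs to spend. For a first GPU, the consumer RTX cards covered above are a better fit.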

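Which GPU Should You Buy First For Deep Learning?

The NVIDIA GTX 1080 Ti was long a popular choice for beginners in deep learning due to its affordability and performance. It lacks Tensor Cores, though, so a newer RTX card such as the RTX 3060 is usually the better first purchase today.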

Do You Need Nvidia GPU For AI?

You don’t need an Nvidia GPU for AI, but it is recommended. Nvidia GPUs offer better performance for AI tasks.

What Is The Minimum GPU For AI Training?

The minimum GPU for AI training is an NVIDIA GTX 1060 with 6GB VRAM. This GPU balances performance and affordability.

Conclusion

In conclusion, for AI and deep learning beginners as of April 12, 2025, the NVIDIA GeForce RTX 4070 Ti Super is likely the best choice due to its optimal balance of performance, memory, and cost. With 16GB of GDDR6X VRAM, powerful Tensor cores, and compatibility with all major AI frameworks, it provides everything a beginner needs to start their journey. While other options like the RTX 3060 or RTX 3080 exist, the RTX 4070 Ti Super offers better future-proofing and value, ensuring a smooth learning experience as you delve deeper into AI and deep learning.

Consider your budget and requirements. The RTX 3060 offers a good balance for newcomers. It provides strong performance at a reasonable price. The RTX 3090 is powerful but expensive.

- Best Budget: RTX 4060 Ti

- Best Performance per Dollar: RTX 4070 Super

- Best for Full-Scale Learning: RTX 3090 or RTX 4080 Super

- Best for Stability & Long Runs: Nvidia RTX A4000

Ultimately, the best Nvidia GPU for AI beginners depends on your goals, workload, and budget. For hobbyists and students, the RTX 4060 Ti offers tremendous value. For those who want to dive deeper, the 4070 Super and 3090 are reliable workhorses that can scale with your ambitions.

Beginners might not need the full potential of a high-end card. Evaluate your needs before investing. Start with a more affordable option. Upgrade as your skills and projects grow. Keep learning and experimenting to find what works best for you.
